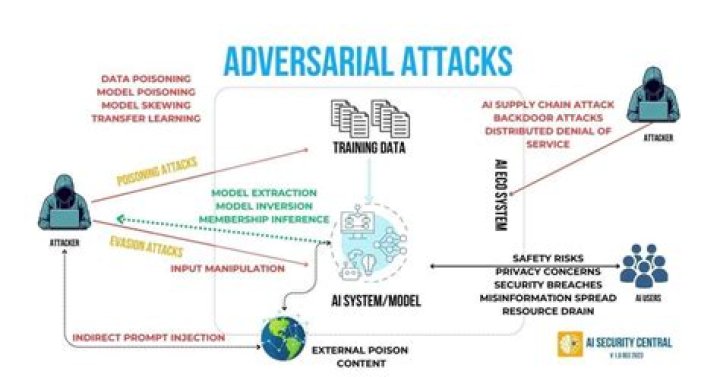

About Adversarial Attacks

An adversarial attack consists of subtly modifying an original image in such a way that the changes are almost undetectable to the human eye. The modified image is called an adversarial image; when submitted to a classifier it is misclassified, while the original image is classified correctly.

How does an adversarial attack work?

Machine learning algorithms accept inputs as numeric vectors. Designing an input in a specific way to get the wrong result from the model is called an adversarial attack. No machine learning algorithm is perfect, and every model makes mistakes on some inputs, even if those inputs are rare in ordinary use.
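As a rough illustration of "designing an input" against a model that sees only numeric vectors, here is a minimal sketch in Python with NumPy. It assumes a toy linear classifier; the weights, bias, input, and the helper name `minimal_sign_perturbation` are all made up for this example and do not come from any particular library or paper.

```python
import numpy as np

def predict(w, b, x):
    """Return 1 if the linear score w.x + b is positive, else 0."""
    return int(np.dot(w, x) + b > 0)

def minimal_sign_perturbation(w, b, x, margin=0.1):
    """Shift every feature by the same small step eps so the score changes sign.

    Hypothetical helper: eps is the smallest uniform per-feature step that
    pushes the score past zero by `margin`. This closed form only holds for
    a linear model whose weights are all nonzero.
    """
    score = np.dot(w, x) + b
    eps = (abs(score) + margin) / np.sum(np.abs(w))
    # Move each feature against the current score, in the direction of its weight.
    direction = -np.sign(score) * np.sign(w)
    return x + eps * direction, eps

# Toy model and input (illustrative values only).
w = np.array([2.0, -1.0, 0.5, 3.0])
b = -0.5
x = np.array([0.5, 0.2, 0.4, 0.3])

x_adv, eps = minimal_sign_perturbation(w, b, x)
print("original prediction:", predict(w, b, x))
print("adversarial prediction:", predict(w, b, x_adv))
print("per-feature change:", eps)
```

Every feature moves by the same small amount, yet the prediction flips, because the small changes all push the score in the same direction and add up across features.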

What is an adversarial attack in machine learning?

Adversarial machine learning is a technique employed in the field of machine learning that attempts to fool models through malicious input. This technique can be applied for a variety of reasons, the most common being to attack or cause a malfunction in standard machine learning models.

What is an adversarial example?

An adversarial example is an instance with small, intentional feature perturbations that cause a machine learning model to make a false prediction. Adversarial examples make machine learning models vulnerable to attacks.
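One common way to make "small, intentional perturbation" precise is sketched below. The symbols are assumptions introduced here for illustration: $f$ is the classifier, $x$ the original instance, $\delta$ the perturbation, and $\epsilon$ a chosen bound on its size. The $\ell_\infty$ norm is only one of several norms used for this purpose.

$$
x' = x + \delta, \qquad \|\delta\|_\infty \le \epsilon, \qquad f(x') \ne f(x)
$$

Here $x'$ is the adversarial example: it differs from $x$ by at most $\epsilon$ in every feature, yet the model's prediction changes.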

Why do adversarial examples exist?

One hypothesis is that adversarial examples occur because classifiers behave poorly off-distribution, that is, when they are evaluated on inputs that are not natural images. Under this view, adversarial examples would occur in arbitrary directions, having nothing to do with the true data distribution.

Related Question Answers

What is a white box attack?

White-box adversarial attacks describe scenarios in which the attacker has access to the underlying training policy network of the target model. Research has found that introducing even small perturbations in the training policy can drastically affect the performance of the model.

What is a black box attack?

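An ATM black box attack, also referred to as jackpotting, is a type of banking-system crime in which the perpetrators bore holes into the top of the cash machine to gain access to its internal infrastructure. In machine learning, by contrast, a black-box attack is one in which the attacker cannot inspect the model's internals and can only observe its outputs for chosen inputs.

Are adversarial examples inevitable?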

A wide range of defenses have been proposed to harden neural networks against adversarial attacks. However, a pattern has emerged in which the majority of adversarial defenses are quickly broken by new attacks. Research on this question shows that, for certain classes of problems, adversarial examples are inescapable.

What is adversarial noise?

In the same way that optical illusions can trick the human brain, adversarial examples can trick neural networks. A small amount of carefully constructed noise is added to an image, causing a neural network to misclassify it even though the image looks exactly the same to a human.

What are adversarial images?

It's possible to leverage this to design “adversarial images,” which are images that have been altered with a carefully calculated input of what looks to us like noise, such that the image looks almost the same to a human but totally different to a classifier, and the classifier makes a mistake when it tries to classify it.

What is masking in machine learning?

Padding is used to insert 0s into shorter time series so that all inputs and outputs have the same length. The padded values could cause errors in training, and hence masking is used. The idea behind masking is to have two additional arrays that record whether an input or output is actually present for a given time step and example.

How do you use adversarial in a sentence?

Example sentences using “adversarial”: There is always another challenge for dragons to face and an adversarial group attempting to thwart their reign of terror. The problem of making mediation adversarial by attaching it to courts is also interestingly identified. A common phrase is “adversarial proceedings.”

What is adversarial testing?

The adversarial testing methodology (see Figure 1) is a cyclic assessment process where the team's knowledge of a target evolves as new test cases and attack vectors are identified and incorporated.

What is the adversarial process in court?

An adversarial process is one that supports conflicting one-sided positions held by individuals, groups or entire societies, as inputs into the conflict resolution situation, typically with rewards for prevailing in the outcome. Often the process takes on a game-like appearance.

What is data poisoning?

A poisoning attack happens when the adversary is able to inject bad data into your model's training pool, and hence get it to learn something it shouldn't. Such attacks aim to inject so much bad data into your system that whatever decision boundary your model learns becomes useless.

Are GANs deep learning?

Generative Adversarial Networks, or GANs, are a deep-learning-based generative model. More generally, GANs are a model architecture for training a generative model, and it is most common to use deep learning models in this architecture.

What is deep learning AI?

Deep learning is a subset of machine learning in artificial intelligence (AI) that uses multi-layer neural networks, which can learn from data that is unstructured or unlabeled. It is also known as deep neural learning or deep neural networks.

What is gradient masking?

“Gradient masking” is a term introduced in “Practical Black-Box Attacks against Deep Learning Systems using Adversarial Examples” to describe an entire category of failed defense methods that work by trying to deny the attacker access to a useful gradient.

What is adversarial sample manufacturing?

Adversarial examples are inputs to machine learning models that an attacker has intentionally designed to cause the model to make a mistake; they're like optical illusions for machines. An adversarial input, overlaid on a typical image, can cause a classifier to miscategorize a panda as a gibbon.
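The idea of overlaying a designed input on an image can be sketched with a single sign-gradient step, often called the fast gradient sign method. The code below is a hedged toy: it assumes a small linear softmax classifier with random weights standing in for a real network, a random 64-pixel "image", and an illustrative step size `eps=0.05`. The function name `fgsm_overlay` is hypothetical, and the text above does not say which method produced the panda example.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D array of logits."""
    z = z - np.max(z)
    e = np.exp(z)
    return e / e.sum()

def cross_entropy(W, x, label):
    """Loss of the toy classifier softmax(W @ x) on the given label."""
    return -np.log(softmax(W @ x)[label])

def fgsm_overlay(W, x, label, eps):
    """Add an eps-sized sign-gradient overlay, keeping pixels in [0, 1]."""
    p = softmax(W @ x)
    p[label] -= 1.0                  # gradient of the loss w.r.t. the logits
    grad_x = W.T @ p                 # chain rule back to the pixels
    return np.clip(x + eps * np.sign(grad_x), 0.0, 1.0)

# Stand-in model and image (assumptions for illustration only).
rng = np.random.default_rng(0)
W = rng.normal(size=(3, 64))         # 3 classes, 64 pixels
image = rng.uniform(size=64)         # pixel intensities in [0, 1]
label = int(np.argmax(W @ image))    # treat the current prediction as the true label

adv = fgsm_overlay(W, image, label, eps=0.05)
print("max pixel change:", np.max(np.abs(adv - image)))
print("loss before:", cross_entropy(W, image, label))
print("loss after: ", cross_entropy(W, adv, label))
```

No pixel changes by more than `eps`, and the loss on the original label does not decrease. Whether the predicted class actually flips depends on the model and on `eps`, so the sketch makes no claim about that.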