Iteration. The iteration count is the number of times a batch of data has passed through the algorithm. In the case of neural networks, one pass means a forward pass and a backward pass. So, every time you pass a batch of data through the NN, you have completed an iteration.

Also, what is iteration in machine learning?

Iteration is a term used in machine learning; the iteration count indicates the number of times the algorithm's parameters are updated. Exactly what this means depends on context. A typical single iteration of neural network training includes processing one batch of the training dataset.
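As a minimal sketch of the idea that one parameter update equals one iteration, the plain-Python loop below fits a single weight `w` to toy data generated from y = 3x using mini-batch gradient descent. The data, the learning rate, and the function name `train` are assumptions made for illustration only.

```python
def train(xs, ys, batch_size, epochs, lr=0.01):
    """Fit y ~ w * x with mini-batch gradient descent.

    Returns the learned weight and the number of iterations,
    counted here as the number of parameter updates.
    """
    w = 0.0
    iterations = 0
    for _ in range(epochs):
        # One epoch: walk through the whole dataset, one batch at a time.
        for start in range(0, len(xs), batch_size):
            bx = xs[start:start + batch_size]
            by = ys[start:start + batch_size]
            # Forward pass (w * x) and gradient of the mean squared
            # error with respect to w, averaged over the batch.
            grad = sum(2 * (w * x - y) * x for x, y in zip(bx, by)) / len(bx)
            # Parameter update: this is what counts as one iteration.
            w -= lr * grad
            iterations += 1
    return w, iterations


# Hypothetical toy data: 8 samples drawn from y = 3x.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [3.0 * x for x in xs]

w, iterations = train(xs, ys, batch_size=4, epochs=50)
print(iterations)  # 2 batches per epoch * 50 epochs = 100
```

With 8 samples and a batch size of 4, each epoch contributes two iterations, so the counter grows by two per pass over the data.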

One may also ask, what is the difference between an epoch and an iteration?

An iteration is one forward and backward pass over a single batch of images (for example, with a batch size of 16, one iteration processes 16 images). An epoch is complete once every image in the training set has gone through the network, forward and backward, exactly once.

Besides the above, what is an epoch in a neural network?

In terms of artificial neural networks, an epoch refers to one cycle through the full training dataset. Usually, training a neural network takes more than a few epochs. The number of iterations is the number of batches, or steps through partitioned packets of the training data, needed to complete one epoch.
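The relationship between dataset size, batch size, and iterations is simple arithmetic, sketched below. The helper names are hypothetical, and the sketch assumes a final partial batch still counts as one iteration (hence the ceiling); some data loaders can be configured to drop that last batch instead.

```python
import math


def iterations_per_epoch(n_samples, batch_size):
    """Number of batches needed to see every sample once.

    Assumption: an incomplete final batch is kept, so we round up.
    """
    return math.ceil(n_samples / batch_size)


def total_iterations(n_samples, batch_size, epochs):
    """Iterations performed over a whole training run."""
    return iterations_per_epoch(n_samples, batch_size) * epochs


print(iterations_per_epoch(2000, 500))    # 4
print(iterations_per_epoch(20000, 100))   # 200
print(iterations_per_epoch(1050, 100))    # 11 (10 full batches + 1 partial)
print(total_iterations(2000, 500, 10))    # 40
```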

What are epoch and batch size in a neural network?

The batch size is the number of samples processed before the model is updated. The number of epochs is the number of complete passes through the training dataset. The size of a batch must be more than or equal to one and less than or equal to the number of samples in the training dataset.
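The constraint on batch size can be checked in a few lines. The sketch below also labels the three regimes the bounds imply, using the commonly used names (stochastic, mini-batch, full batch); the function name `batch_regime` and the exact label strings are assumptions for illustration.

```python
def batch_regime(batch_size, n_samples):
    """Validate a batch size and name the gradient descent variant it implies."""
    if not 1 <= batch_size <= n_samples:
        raise ValueError(
            f"batch_size must be between 1 and {n_samples}, got {batch_size}"
        )
    if batch_size == 1:
        return "stochastic"   # parameters updated after every sample
    if batch_size == n_samples:
        return "full batch"   # one update per pass over the dataset
    return "mini-batch"       # anything in between


print(batch_regime(1, 1000))     # stochastic
print(batch_regime(100, 1000))   # mini-batch
print(batch_regime(1000, 1000))  # full batch
```

Note that for a dataset with a single sample the first branch wins, so the function reports "stochastic" even though the batch also covers the full dataset.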

Related Question Answers

What is Batch_size?

batch_size denotes the size of the subset of your training sample (e.g. 100 out of 1000) that is used to train the network at each step of its learning process. The batches train the network in succession, each one starting from the updated weights produced by applying the previous batch.

What is iteration in coding?

Iteration, in the context of computer programming, is a process wherein a set of instructions or structures is repeated in sequence a specified number of times or until a condition is met. When the first set of instructions is executed again, it is called an iteration.

What is steps per epoch?

An epoch usually means one iteration over all of the training data. For instance, if you have 20,000 images and a batch size of 100, then the epoch should contain 20,000 / 100 = 200 steps.

What is epoch in ML?

Epoch is a term that is often used in the context of machine learning. An epoch is one complete presentation of the data set to be learned to a learning machine. Learning machines like feedforward neural nets that use iterative algorithms often need many epochs during their learning phase.

Is machine learning an iterative process?

Machines can also learn by repeating a process and refining the result each time, and this is called 'Iterative machine learning'. In most cases, iteration is an efficient learning approach that helps reach the desired end results faster and more accurately without becoming a resource crunch nightmare.

What is iteration in deep learning?

Iteration is a term used in machine learning; the iteration count indicates the number of times the algorithm's parameters are updated. Exactly what this means depends on context. A typical single iteration of neural network training includes processing one batch of the training dataset.

How many epochs are there?

Note: The number of batches is equal to the number of iterations for one epoch. Let's say we have 2000 training examples that we are going to use. If we divide the dataset of 2000 examples into batches of 500, then it will take 4 iterations to complete 1 epoch.

What is batch learning?

Batch means a group of training samples. In gradient descent algorithms, you can calculate the sum of gradients with respect to several examples and then update the parameters using this cumulative gradient. If you 'see' all training examples before one 'update', then it's called full batch learning.

What is Adam Optimizer?

Adam [1] is an adaptive learning rate optimization algorithm that has been designed specifically for training deep neural networks. The algorithm leverages the power of adaptive learning rate methods to find individual learning rates for each parameter.

What is the oldest epoch?

In geochronology, an epoch is a subdivision of the geologic timescale that is longer than an age but shorter than a period. The current epoch is the Holocene Epoch of the Quaternary Period. Rock layers deposited during an epoch are called a series.

What is Overfitting in neural network?

One of the problems that occur during neural network training is called overfitting. The error on the training set is driven to a very small value, but when new data is presented to the network the error is large. The network has memorized the training examples, but it has not learned to generalize to new situations.

How do you pronounce epoch time?

I pronounce the word 'epoch' using four sounds. The first is a close front vowel sound /i:/, next is /p/, which is followed by a back rounded vowel, just above open, as in 'pot', and the last is the consonant sound /k/. 'Epoch' has two syllables.

What is epoch in physics?

An epoch, in physics, refers to the displacement acquired by an oscillating body at time t = 0.

What is epoch TensorFlow?

An epoch, in machine learning, is one complete processing of the entire training set by the learning algorithm. The MNIST train set is composed of 55000 samples. Once the algorithm has processed all those 55000 samples, an epoch has passed. Epoch is not something intrinsic to the TensorFlow framework.

What is activation function in neural network?

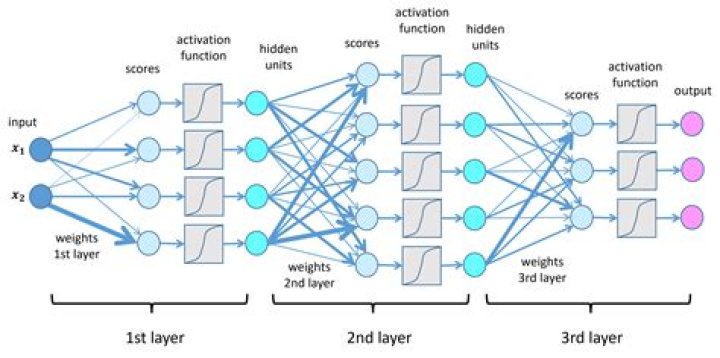

Activation functions are mathematical equations that determine the output of a neural network. The function is attached to each neuron in the network, and determines whether it should be activated (“fired”) or not, based on whether each neuron's input is relevant for the model's prediction.

Is bigger batch size better?

A smaller mini-batch size (though not too small) usually leads not only to fewer iterations of the training algorithm than a large batch size, but also to higher accuracy overall, that is, a neural network that performs better in the same amount of training time or less.

Does batch size affect accuracy?

Batch size controls the accuracy of the estimate of the error gradient when training neural networks. Batch, Stochastic, and Minibatch gradient descent are the three main flavors of the learning algorithm. There is a tension between batch size and the speed and stability of the learning process.

What is a Softmax classifier?

The Softmax classifier gets its name from the softmax function, which is used to squash the raw class scores into normalized positive values that sum to one, so that the cross-entropy loss can be applied.

How many years is an epoch?

Earth's geologic epochs—time periods defined by evidence in rock layers—typically last more than three million years. We're barely 11,500 years into the current epoch, the Holocene.